E3SM Time Integration, Flux Calculation and Field Transfer

Terminology:

The coupler is a component that performs operations such as interpolation, time averaging or merging.

The driver is the "main" program that runs on all processors and controls the overall integration of the coupled model.

A field is a quantity involved in the coupler data flow. Surface temperature, heat flux, and downward longwave radiation are all examples of fields.

In CIME, the coupler and driver are referred to as "cpl7". cpl7 uses MCT datatypes to store all data in the coupler and MCT methods to perform communication and interpolation (using pre-computed weights).

See also CIME Key Terms and Concepts

Driver/Coupler Code pointers

There have been several versions of the coupler in CESM, the parent model of E3SM. The most recent is called "cpl7" and is written using MCT datatypes and methods.

The MCT-based version of cpl7 is in https://github.com/ESMCI/cime/tree/master/src/drivers/mct/main

To use cpl7, each component must instantiate cpl7 datatypes and use cpl7 methods to communicate with the coupler/driver (these are mostly wrappers around MCT datatypes and methods). The datatypes store all transferred data in memory.

Example: EAM's code to do this is located in https://github.com/E3SM-Project/E3SM/blob/master/components/eam/src/cpl/atm_comp_mct.F90

At a runtime-selectable frequency, each component communicates with the coupler, sending the data it has calculated. At the same frequency, but possibly at a different point in the component's call sequence, it receives data from the coupler.

Explicit integration sequence, described for the atmosphere and ocean

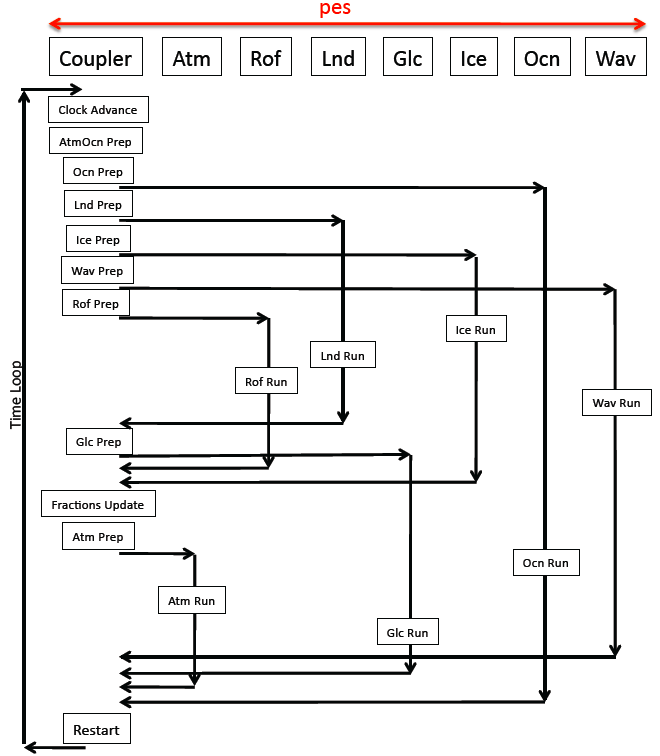

The driver calls each model's run method and the various coupler functions in a fixed sequence. The driver code allows for a few slightly different sequences that can be selected at runtime using CPL_SEQ_OPTION in env_run.xml. The sequence used by E3SM in coupled runs is RASM_OPTION1, illustrated in the diagram above.

The diagram above is drawn as if each component ran on a distinct set of MPI tasks. In most real cases, the ocean is the only component on its own tasks; the other components share a separate pool of tasks to varying degrees. The exact layout is controlled by the env_mach_pes.xml file in the case directory. The driver gives each component an MPI communicator containing its tasks. It also defines several other communicators, each of which is the union of one component's tasks with the coupler's tasks. Nearly every call in the driver sits inside an "if (communicator)" block that controls which set of processors executes it. This allows the driver to run components concurrently when the processor layout allows it.
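To make the communicator logic concrete, here is a minimal sketch that models each MPI communicator as a Python set of rank numbers. The layout and function names are hypothetical (a real layout comes from env_mach_pes.xml, and the driver uses MPI communicators, not sets); the sketch only shows how membership decides which "if (communicator)" blocks a rank enters and why disjoint task sets permit concurrency.

```python
# Hypothetical processor layout: component name -> set of MPI ranks.
# Ocean on its own tasks; the coupler and other components share a pool.
layout = {
    "cpl": set(range(0, 64)),
    "atm": set(range(0, 64)),
    "lnd": set(range(0, 32)),
    "ice": set(range(32, 64)),
    "ocn": set(range(64, 96)),
}

def union_comm(a, b, layout):
    """Ranks in the union 'communicator' of components a and b (e.g. cpl + ocn)."""
    return layout[a] | layout[b]

def can_run_concurrently(a, b, layout):
    """Two components can run at the same time only if their task sets are disjoint."""
    return layout[a].isdisjoint(layout[b])

def calls_on_rank(rank, layout):
    """Components whose 'if (communicator)' blocks this rank would enter."""
    return sorted(name for name, ranks in layout.items() if rank in ranks)

print(can_run_concurrently("atm", "ocn", layout))  # True
print(can_run_concurrently("atm", "lnd", layout))  # False
print(calls_on_rank(10, layout))                   # ['atm', 'cpl', 'lnd']
```

With this layout the ocean can run while the atmosphere, land, and sea ice share the remaining tasks, which matches the common case described above.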

("prep" stands for "prepare"; these routines ensure that all the data a component needs is ready for the next time its run method is called.)

Read the above diagram for atmosphere-ocean as follows: at the start, the coupler has atmosphere and ocean data from either the previous step or the initialization sequence. On the coupler tasks, the driver calculates atmosphere-ocean fluxes on the ocean grid (AtmOcn Prep). The driver then calls, on the coupler tasks, "Ocn Prep" where any additional data the ocean needs (such as ice-ocean fluxes from the sea-ice model or river runoff) are merged (using a simple weighted sum with area fractions) so that the ocean gets one combined heat, freshwater or momentum flux for each of its grid points.
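The merge step can be sketched as a fraction-weighted sum at each ocean grid point. This is a simplified illustration, not the cpl7 merge routine: the two sources, the field values, and the sign convention (positive into the ocean) are assumptions, and the real merge also handles additional sources such as river runoff.

```python
def merge_fluxes(contributions):
    """
    Merge per-source fluxes into one value per grid point.

    contributions: list of (fraction, flux) pairs, each a list over grid points.
    At every point the fractions are expected to sum to 1.
    """
    npts = len(contributions[0][0])
    merged = [0.0] * npts
    for i in range(npts):
        total_frac = sum(frac[i] for frac, _ in contributions)
        if abs(total_frac - 1.0) > 1e-12:
            raise ValueError(f"fractions at point {i} sum to {total_frac}, not 1")
        merged[i] = sum(frac[i] * flux[i] for frac, flux in contributions)
    return merged

# Three hypothetical ocean grid points: open-water and sea-ice area fractions.
open_frac = [1.0, 0.75, 0.0]
ice_frac = [0.0, 0.25, 1.0]
atm_ocn_heat = [-50.0, -40.0, -30.0]  # W/m^2 under open water (computed in the coupler)
ice_ocn_heat = [0.0, 10.0, 20.0]      # W/m^2 under sea ice (from the sea-ice model)

net = merge_fluxes([(open_frac, atm_ocn_heat), (ice_frac, ice_ocn_heat)])
print(net)  # [-50.0, -27.5, 20.0]
```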

The driver then calls a routine that transfers data from the coupler to the ocean (the horizontal line from "Ocn Prep" to "Ocn Run") on a communicator that is the union of coupler and ocean tasks. This blocks (on that communicator) until finished.

The driver then calls the ocean's run method. The ocean will execute some number of time steps internally using the surface forcing provided by the coupler.

When the ocean finishes, it waits for the driver to call a method that transfers all the needed ocean data back to the coupler (the horizontal line going from "Ocn Run" and pointing to the left). If the driver gets to this method before the ocean is finished, the union of coupler and ocean tasks will wait. (Load balancing in the coupled model tries to allocate processors to components to minimize this wait time.)

Meanwhile, after the driver calls the ocean's run method, it can still do work on the non-ocean tasks. It receives data from other components (land, sea ice). When the driver calls "Atm Prep" on the coupler processors, "Atm Prep" will have all the data from other components that has been transferred to the coupler (in MCT datatypes). It performs any interpolation necessary to put that data on the atmosphere grid. It then merges the input from multiple surface components so that each atmosphere grid point has a single value for heat, water, and momentum flux. The driver then transfers the data to the atmosphere (on the union of atmosphere and coupler processors).

The driver will then call the atmosphere run method (on the atmosphere tasks). The atmosphere executes some number of time steps. While the atmosphere is running, the driver calls the routine to transfer data from the ocean to the coupler (on the union of coupler and ocean tasks). The driver will then call the routine to send data from the atmosphere to the coupler (on the union of atmosphere and coupler tasks).

At this point, we are at the bottom of the run sequence and we return to the top where the coupler has the atmosphere and ocean data it has received from the previous iteration.

Time step and coupler frequency control

How often each component sends/receives data to/from the coupler is first determined by the NCPL_BASE_PERIOD. This is almost always 1 day and is set in env_run.xml.

The coupling frequency is expressed as an integer number of couplings per NCPL_BASE_PERIOD. The atmosphere's coupling frequency is denoted by NCPL_ATM in the XML configuration files. Its value depends on the component set and the grid; the options are listed in the driver's config_component_e3sm.xml file: https://github.com/E3SM-Project/E3SM/blob/maint-1.0/cime/src/drivers/mct/cime_config/config_component_e3sm.xml

In a fully coupled case with 1-degree atmosphere resolution, NCPL_ATM = 48. With a base period of 1 day, the atmosphere communicates with the coupler every 30 simulated minutes. The longest time step for the atmosphere (dtime) is also set to 30 minutes. (The "dtime" variable in the atmosphere namelist is ignored.) The atmosphere may (and often does) subcycle parts of its solve within those 30 minutes.

As atmosphere resolution increases, the coupling frequency increases. For ne120, the coupling frequency is set to 96 times per day (every 15 minutes), and the atmosphere's time step is then also 15 minutes.
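The link between NCPL and the coupling interval is a division of the base period. A minimal sketch, assuming NCPL_BASE_PERIOD is 1 day (86,400 s); the helper name is hypothetical:

```python
def coupling_interval_seconds(ncpl, base_period_seconds=86400):
    """Length of one coupling interval, given ncpl couplings per base period."""
    if base_period_seconds % ncpl != 0:
        raise ValueError("ncpl must divide the base period evenly")
    return base_period_seconds // ncpl

print(coupling_interval_seconds(48) / 60)  # 30.0 minutes (1-degree atmosphere)
print(coupling_interval_seconds(96) / 60)  # 15.0 minutes (ne120)
```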

The driver contains code for time-averaging the data sent to a component when coupling frequencies don't match. For example, if the ocean coupling period is 3 hours (NCPL_OCN = 8 with a 1-day base period) while the atmosphere's is 1 hour (NCPL_ATM = 24), the coupler accumulates the atmosphere-ocean fluxes calculated each time the atmosphere communicates with the coupler and sends the ocean their average.
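A minimal sketch of that accumulate-and-average pattern, using plain Python lists in place of MCT datatypes. The class is hypothetical; the numbers assume a 1-day base period with NCPL_ATM = 24 (1-hour coupling) and NCPL_OCN = 8 (3-hour coupling), so three atmosphere-coupling fluxes are averaged for each ocean coupling. Equal weights are appropriate here because every atmosphere coupling interval has the same length.

```python
class FluxAccumulator:
    """Sums fluxes computed at each atmosphere coupling and returns their
    time average when the ocean coupling time arrives."""

    def __init__(self, npts):
        self.sums = [0.0] * npts
        self.count = 0

    def accumulate(self, flux):
        for i, value in enumerate(flux):
            self.sums[i] += value
        self.count += 1

    def average_and_reset(self):
        if self.count == 0:
            raise RuntimeError("nothing accumulated")
        avg = [s / self.count for s in self.sums]
        self.sums = [0.0] * len(self.sums)
        self.count = 0
        return avg

ncpl_atm, ncpl_ocn = 24, 8
steps_per_ocn = ncpl_atm // ncpl_ocn  # 3 atmosphere couplings per ocean coupling

acc = FluxAccumulator(npts=2)
sent_to_ocean = []
for step in range(1, ncpl_atm + 1):
    flux = [float(step), 2.0 * step]   # stand-in for atm-ocn fluxes computed at this step
    acc.accumulate(flux)
    if step % steps_per_ocn == 0:      # ocean coupling time: send the average
        sent_to_ocean.append(acc.average_and_reset())

print(len(sent_to_ocean))  # 8
print(sent_to_ocean[0])    # [2.0, 4.0]
```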

In the current implementation, the coupling period must be identical for the atmosphere, sea-ice, and land components. The ocean coupling period can be the same or longer. The runoff coupling period should lie between the land and ocean coupling periods (or equal one of them). All coupling periods must be integer multiples of the smallest coupling period and must evenly divide the NCPL_BASE_PERIOD. No component model can have a time step longer than its coupling period.
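These rules can be expressed as a small checker. This is a hypothetical sketch, not CIME's actual validation code; it takes coupling periods in seconds (period = NCPL_BASE_PERIOD / NCPL) and returns the list of rules that are violated.

```python
def check_coupling(periods, base_period=86400, timesteps=None):
    """
    periods: coupling periods in seconds, keys 'atm', 'lnd', 'ice', 'ocn', 'rof'.
    timesteps: optional dict of component time steps in seconds.
    Returns a list of violated rules (empty if all rules hold).
    """
    errors = []
    atm, lnd, ice, ocn, rof = (periods[k] for k in ("atm", "lnd", "ice", "ocn", "rof"))
    if not (atm == lnd == ice):
        errors.append("atm, lnd and ice coupling periods must be identical")
    if ocn < atm:
        errors.append("ocn coupling period must be >= atm coupling period")
    if not (min(lnd, ocn) <= rof <= max(lnd, ocn)):
        errors.append("rof coupling period must lie between lnd and ocn periods")
    smallest = min(periods.values())
    for name, p in periods.items():
        if p % smallest != 0:
            errors.append(f"{name} period is not a multiple of the smallest period")
        if base_period % p != 0:
            errors.append(f"{name} period does not evenly divide the base period")
    for name, dt in (timesteps or {}).items():
        if dt > periods[name]:
            errors.append(f"{name} time step exceeds its coupling period")
    return errors

ok = {"atm": 1800, "lnd": 1800, "ice": 1800, "ocn": 10800, "rof": 10800}
print(check_coupling(ok))  # []
bad = dict(ok, ocn=900)    # ocean coupling faster than the atmosphere's
print(check_coupling(bad))
```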

Flux calculation

The atmosphere-ocean fluxes are unique in being calculated in the coupler. This was done because for a long time the ocean communicated with the coupler only once a day, while the atmosphere needed fluxes that responded to its changing lowest-level properties. Also, the fluxes are calculated on the high-resolution ocean grid. So in the coupler, SST was held fixed and new heat and momentum fluxes were calculated each time new atmosphere properties were sent to the coupler (and interpolated to the ocean grid). The fluxes are interpolated back to the atmosphere grid and sent to the atmosphere. Meanwhile, the fluxes on the ocean grid are summed and then averaged before being sent to the ocean.
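The fixed-SST idea can be illustrated with a constant-coefficient bulk formula for sensible heat. This is a deliberately simplified stand-in, not the coupler's actual flux scheme (which computes its transfer coefficients rather than assuming one); the constants, atmospheric states, and upward-positive sign convention are assumptions for illustration. SST stays fixed while each atmosphere coupling supplies new near-surface properties, and the ocean receives the average.

```python
# Simplified constant-coefficient bulk formula (an assumption, not the coupler's scheme).
RHO_AIR = 1.2    # air density, kg/m^3 (assumed constant)
CP_AIR = 1005.0  # specific heat of air at constant pressure, J/(kg K)
C_H = 1.2e-3     # sensible-heat transfer coefficient (assumed constant here)

def sensible_heat_flux(sst, t_air, wind_speed):
    """Sensible heat flux in W/m^2, positive upward (ocean to atmosphere)."""
    return RHO_AIR * CP_AIR * C_H * wind_speed * (sst - t_air)

sst = 290.0  # K, held fixed until the ocean next sends data to the coupler

# Near-surface air temperature (K) and wind speed (m/s) at three successive
# atmosphere couplings, already interpolated to the ocean grid (hypothetical values).
atm_states = [(288.0, 5.0), (287.0, 8.0), (289.0, 3.0)]

fluxes = [sensible_heat_flux(sst, t_air, wind) for t_air, wind in atm_states]
ocean_forcing = sum(fluxes) / len(fluxes)  # time average sent to the ocean
print([round(f, 2) for f in fluxes], round(ocean_forcing, 2))
```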

All other inter-component fluxes are calculated in a component. Atmosphere-land fluxes are calculated in the land model. Atmosphere-sea-ice fluxes are calculated in the sea-ice model. Land-river fluxes are calculated in the land model.

Defining and setting fields to be transferred

The fields exchanged between components and the coupler are hard-coded as text strings in a file called seq_flds_mod.F90 (see https://github.com/ESMCI/cime/blob/master/src/drivers/mct/shr/seq_flds_mod.F90).

The colon-separated strings are parsed by MCT to determine how much space to allocate in its datatypes (by counting the substrings between colons), and the substrings are then used as names to locate specific fields within an MCT Attribute Vector.
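A minimal sketch of how a colon-separated list can size storage and map names to positions, in the spirit of an Attribute Vector. This is not MCT's implementation (MCT, for example, keeps real and integer attributes in separate lists); the function and variable names are hypothetical. The final assignment mirrors the a2x(index_a2x_Sa_tbot, ig) indexing used in EAM's copy-in code.

```python
def build_index(field_list):
    """Map each colon-separated field name to its position in the list."""
    names = field_list.split(":")
    if len(set(names)) != len(names):
        raise ValueError("duplicate field name")
    return {name: i for i, name in enumerate(names)}

a2x_states = "Sa_z:Sa_topo:Sa_u:Sa_v:Sa_tbot"
index = build_index(a2x_states)
nfields = len(index)  # number of fields = number of substrings between colons

ngrid = 4  # hypothetical number of local grid points
av = [[0.0] * ngrid for _ in range(nfields)]  # storage indexed as av[field][point]

av[index["Sa_tbot"]][2] = 271.5  # set bottom-level temperature at local point 2
```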

There is a base set of fields always passed between components in cpl7. If you want to add a new ocean model to E3SM, you must make sure it supplies values for at least the base fields. The field list can be extended for certain compsets, such as those involving biogeochemistry. For example, if "flds_bgc_oi" is TRUE, additional fields are added for biogeochemical interactions between the ocean and sea ice.

The resulting string is output to the cpl.log when running. Here is a typical list of fields sent from the atmosphere to the coupler:

```
(seq_flds_set) : seq_flds_a2x_states= Sa_z:Sa_topo:Sa_u:Sa_v:Sa_tbot:Sa_ptem:Sa_shum:Sa_pbot:Sa_dens:Sa_pslv:Sa_co2prog:Sa_co2diag
(seq_flds_set) : seq_flds_a2x_fluxes= Faxa_rainc:Faxa_rainl:Faxa_snowc:Faxa_snowl:Faxa_lwdn:Faxa_swndr:Faxa_swvdr:Faxa_swndf:Faxa_swvdf:Faxa_swnet:Faxa_bcphidry:Faxa_bcphodry:Faxa_bcphiwet:Faxa_ocphidry:Faxa_ocphodry:Faxa_ocphiwet:Faxa_dstwet1:Faxa_dstwet2:Faxa_dstwet3:Faxa_dstwet4:Faxa_dstdry1:Faxa_dstdry2:Faxa_dstdry3:Faxa_dstdry4
```

The strings are separated into "states" and "fluxes" because each group is interpolated with different weights in the coupler, so they are stored in separate MCT Attribute Vectors and two mapping calls are made.
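Applying pre-computed weights amounts to a sparse matrix-vector product per field. The sketch below uses two made-up weight sets on tiny hypothetical grids to show why states and fluxes, mapped with different weights, need separate mapping calls; real weights come from offline-generated mapping files, and the function name is hypothetical.

```python
def apply_weights(weights, src, n_dst):
    """Sparse matrix-vector product dst = W @ src, with W stored as
    (dst_index, src_index, weight) triplets."""
    dst = [0.0] * n_dst
    for row, col, w in weights:
        dst[row] += w * src[col]
    return dst

# Hypothetical 2-cell source grid mapped to a 3-cell destination grid.
# States and fluxes use different weight sets, hence two mapping calls.
state_weights = [(0, 0, 1.0), (1, 0, 0.5), (1, 1, 0.5), (2, 1, 1.0)]
flux_weights = [(0, 0, 1.0), (1, 0, 0.25), (1, 1, 0.75), (2, 1, 1.0)]

tbot = [280.0, 290.0]    # a state field on the source grid
rain = [1.0e-5, 3.0e-5]  # a flux field on the source grid

tbot_dst = apply_weights(state_weights, tbot, 3)  # [280.0, 285.0, 290.0]
rain_dst = apply_weights(flux_weights, rain, 3)
```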

The substrings have a format of "<source>_<variable>". "Faxa_rain" means a Flux from the atmosphere "a" to the coupler "x" on the atmosphere grid. In the coupler, this field will be interpolated to create "Faxo_rain". The "source" string is more useful in the coupler where variables on several grids from several components exist at once.
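The naming convention can be decoded mechanically. This hypothetical helper assumes the four-letter flux prefix reads as [F][from][to][grid] (inferred from the Faxa/Faxo example above) and the two-letter state prefix as [S][source]; only the component letters that appear on this page are included.

```python
# Only the component letters that appear on this page; other components exist.
COMPONENTS = {"a": "atmosphere", "o": "ocean", "x": "coupler"}

def describe_field(name):
    """Decode a field name like 'Faxa_rainc' (flux) or 'Sa_tbot' (state)."""
    prefix, variable = name.split("_", 1)
    if prefix[0] == "S" and len(prefix) == 2:
        return {"kind": "state", "source": COMPONENTS[prefix[1]], "variable": variable}
    if prefix[0] == "F" and len(prefix) == 4:
        return {"kind": "flux",
                "from": COMPONENTS[prefix[1]],
                "to": COMPONENTS[prefix[2]],
                "grid": COMPONENTS[prefix[3]],
                "variable": variable}
    raise ValueError(f"unrecognized prefix in {name!r}")

print(describe_field("Faxa_rainc"))
print(describe_field("Faxo_rainc"))  # the same flux, named as if on the ocean grid
print(describe_field("Sa_tbot"))
```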

When sending to or receiving from the coupler, a component must do a deep copy between its internal data type and the cpl7 datatype (the MCT Attribute Vector). In the atmosphere, you can see this copy in/out in https://github.com/E3SM-Project/E3SM/blob/master/components/eam/src/cpl/atm_import_export.F90. Here are a few lines from the code that copies data into the cpl7 datatypes for sending to the coupler:

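```fortran
ig = 1
do c = begchunk, endchunk          ! loop over the atmosphere's physics chunks
   ncols = get_ncols_p(c)          ! number of columns in this chunk
   do i = 1, ncols
      ! Copy lowest-level atmosphere states into the cpl7 attribute vector.
      ! a2x(field_index, ig): the first index selects the field, the second the grid point.
      a2x(index_a2x_Sa_pslv, ig) = cam_out(c)%psl(i)
      a2x(index_a2x_Sa_z   , ig) = cam_out(c)%zbot(i)
      a2x(index_a2x_Sa_u   , ig) = cam_out(c)%ubot(i)
      a2x(index_a2x_Sa_v   , ig) = cam_out(c)%vbot(i)
      a2x(index_a2x_Sa_tbot, ig) = cam_out(c)%tbot(i)
      a2x(index_a2x_Sa_ptem, ig) = cam_out(c)%thbot(i)
      ig = ig + 1                  ! advance to the next point in the flattened local array
   end do
end do
```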

The coupled model developer must know how to find the correct fields in the model's native data types (cam_out in this example) and then how to copy their values in the right order into the cpl7 datatype (a2x).

Once data has been copied into the cpl7 datatypes, the coupler can perform all necessary interpolation and data transfer to get the data to the components that need it. The sending component, in this case the atmosphere, needs to send the union of all requested data but doesn't have to know where it all goes. Similarly, the coupler expects to receive good values for all of this data but doesn't need to know whether they come from a "real" model or from a "data model" that reads them from files.

In cpl7, all data transfer is done within memory using MPI (no "coupling by files").

The MCT "Rearranger" is the method used to move data between the coupler and the components. The Rearranger can use either multiple point-to-point MPI_Send/MPI_Recv calls or MPI_Alltoall. For a more complete explanation of how MCT uses its GlobalSegmentMap and AttributeVectors to do parallel communication, see https://journals.sagepub.com/doi/abs/10.1177/1094342005056116
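To illustrate what a segment-map-based transfer has to work out, here is a hypothetical sketch that derives, from two decompositions of the same global grid, which global points each source task must send to each destination task. It shows roughly the kind of communication schedule MCT precomputes, not MCT's code; segments are (start, length, task) triples with 0-based starts for simplicity.

```python
def owners(gsmap):
    """Expand a segment map [(start, length, task), ...] into {global_index: task}."""
    owner = {}
    for start, length, task in gsmap:
        for g in range(start, start + length):
            owner[g] = task
    return owner

def build_router(src_gsmap, dst_gsmap):
    """For each (src_task, dst_task) pair, the global indices that must be sent."""
    src_owner, dst_owner = owners(src_gsmap), owners(dst_gsmap)
    router = {}
    for g, src_task in src_owner.items():
        router.setdefault((src_task, dst_owner[g]), []).append(g)
    return router

# 8 global points: 2 blocks on the component's tasks, 4 blocks on the coupler's.
component_map = [(0, 4, 0), (4, 4, 1)]
coupler_map = [(0, 2, 0), (2, 2, 1), (4, 2, 2), (6, 2, 3)]
router = build_router(component_map, coupler_map)
print(router)
```

Each key is one message that a Send/Recv pair (or one slot of an all-to-all exchange) would carry.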

Time integration in other components

The atmosphere model: Timestepping within the ACME v1 Atmosphere Model